AI Governance in the Age of Agents


We are entering an era defined not just by AI models, but by AI agents — autonomous systems that perceive, reason, and act in the world. As these capabilities expand, so too does the responsibility to govern them. This article explores what it really means to direct, monitor, and manage AI in a world of agents: the frameworks, the risks, the lifecycle models, and the tooling capabilities every enterprise needs to think about.

What Is AI Governance?

At its core, AI governance is the process of directing, monitoring, and managing the AI activities of an organization through automation. That definition sounds deceptively simple. In practice, it spans every stakeholder — from the data scientist building a model to the compliance officer accountable for its regulatory footprint — and every decision made along the way.

“AI Governance enables organizations to align AI systems with business, legal, and ethical requirements at every stage of the lifecycle of AI models of any type.”

A mature governance platform should be built on three pillars: centralized AI lifecycle management (tracking every model, app, and agent from idea to retirement), proactive risk and security management (detecting and mitigating threats before they materialize), and trustworthy dynamic compliance (maintaining regulatory alignment as rules evolve). Critically, it must be platform-agnostic — capable of governing AI deployed across any cloud, any vendor, any model provider.

Governance in Practice: Macro and Micro

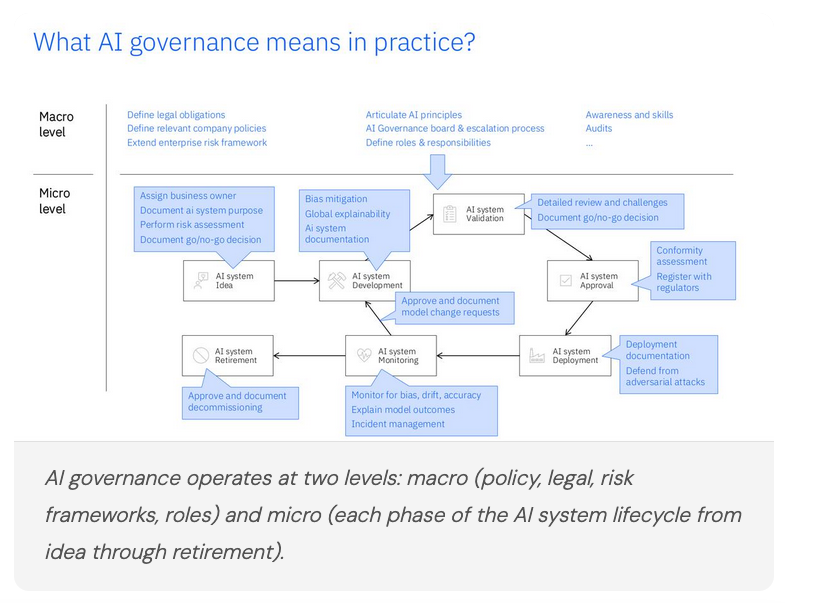

One of the most useful frameworks for operationalizing AI governance is the distinction between macro-level and micro-level governance — and understanding that organizations need both running simultaneously.

At the macro level, governance means articulating AI principles, defining legal obligations, extending the enterprise risk framework, establishing an AI governance board with clear escalation paths, and building organization-wide awareness and skills. These are the guardrails that frame everything else.

At the micro level, governance plays out across the full AI system lifecycle — from ideation and development through deployment, monitoring, and eventual retirement. Each phase carries specific requirements: risk assessments, bias mitigation, explainability documentation, go/no-go decisions, conformity assessments, regulatory registration, adversarial defense, and incident management. Governance is not a one-time gate — it is a continuous loop.

The Risk Landscape: Traditional, Amplified, and New

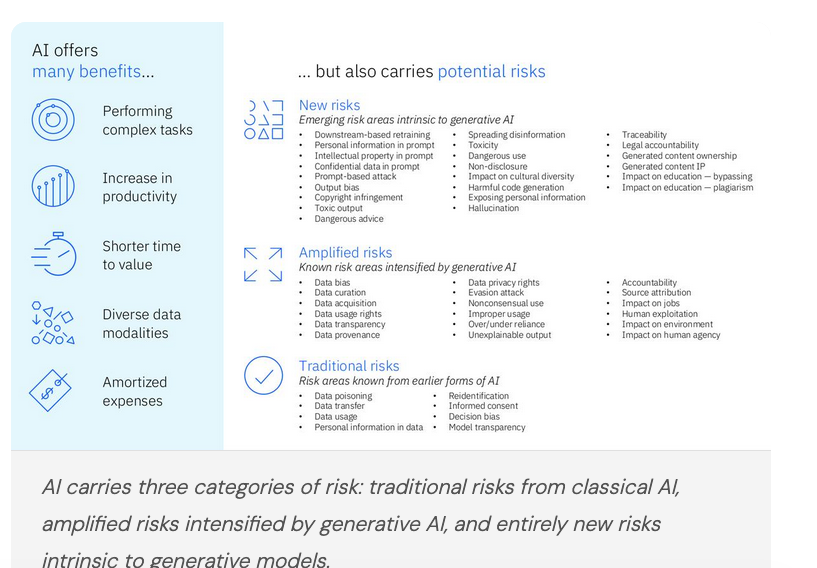

AI’s benefits — productivity gains, shorter time-to-value, the ability to process diverse data modalities, amortized operational costs — are compelling. But they come alongside a significant and growing risk surface that organizations must actively manage.

Traditional Risks:

- Data poisoning

- Decision bias

- Model transparency

- Personal data in training

- Re-identification

Amplified by GenAI:

- Data bias & curation

- Unexplainable output

- Over/under reliance

- Source attribution

- Impact on jobs & agency

New Risks (GenAI):

- Hallucination

- Prompt-based attacks

- Copyright infringement

- Toxic output

- Legal accountability

The Agent Dimension: A New Risk Frontier

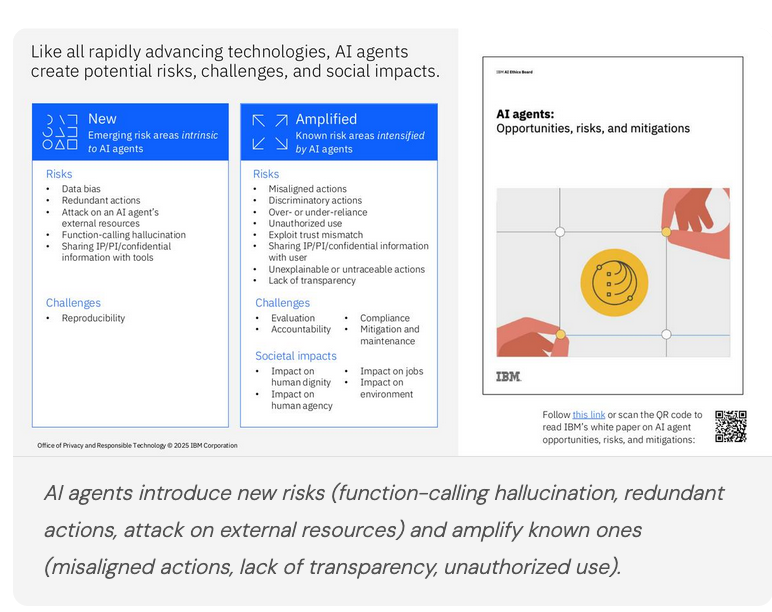

If generative AI expanded the risk surface, agentic AI is expanding it further — and in qualitatively different ways. Agents don’t just respond; they act. They call tools, take sequences of decisions, interact with external systems, and can cascade errors in ways that are difficult to trace or reverse.

Responsible AI research has identified three categories of agent-specific concerns:

- New risks intrinsic to agents — including function-calling hallucination, redundant actions, and attacks targeting an agent’s external resources

- Amplified risks — misaligned or discriminatory actions, unauthorized use, trust mismatch exploitation, unexplainable or untraceable decision chains

- Societal impacts — effects on human dignity, agency, employment, and the environment

The governance challenge unique to agents is the accountability gap: when a multi-step autonomous workflow produces a harmful outcome, who is responsible, and how do you trace the causal chain back through dozens of tool calls?
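One way to narrow that gap is to record every agent action with a pointer to the step that triggered it, so a harmful outcome can be walked back to its origin. The following is a minimal sketch under stated assumptions: the `Step` schema, the `causal_chain` helper, and the sample trace are all hypothetical and not tied to any particular agent framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    """One recorded agent action (hypothetical schema)."""
    step_id: str
    parent_id: Optional[str]  # step that triggered this one; None for the root
    actor: str                # agent or tool that acted
    action: str


def causal_chain(steps: list[Step], step_id: str) -> list[Step]:
    """Walk parent links from a step back to the root; return root-first order."""
    by_id = {s.step_id: s for s in steps}
    chain: list[Step] = []
    seen: set[str] = set()
    current: Optional[str] = step_id
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle detected at step {current}")
        seen.add(current)
        step = by_id[current]
        chain.append(step)
        current = step.parent_id
    return list(reversed(chain))


# Illustrative trace: a planner agent fans out to two tools.
trace = [
    Step("s1", None, "user", "submit refund request"),
    Step("s2", "s1", "planner-agent", "decide to look up order"),
    Step("s3", "s2", "orders-api", "fetch order"),
    Step("s4", "s2", "payments-api", "issue refund"),
]

for step in causal_chain(trace, "s4"):
    print(f"{step.step_id}: {step.actor} -> {step.action}")
```

The design choice worth noting is that the parent link is captured at the moment of the call, not reconstructed afterwards; without it, sibling tool calls such as `s3` and `s4` cannot be separated when assigning responsibility.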

“When agents act autonomously across systems, governance can’t be an afterthought — it has to be embedded in the architecture.”

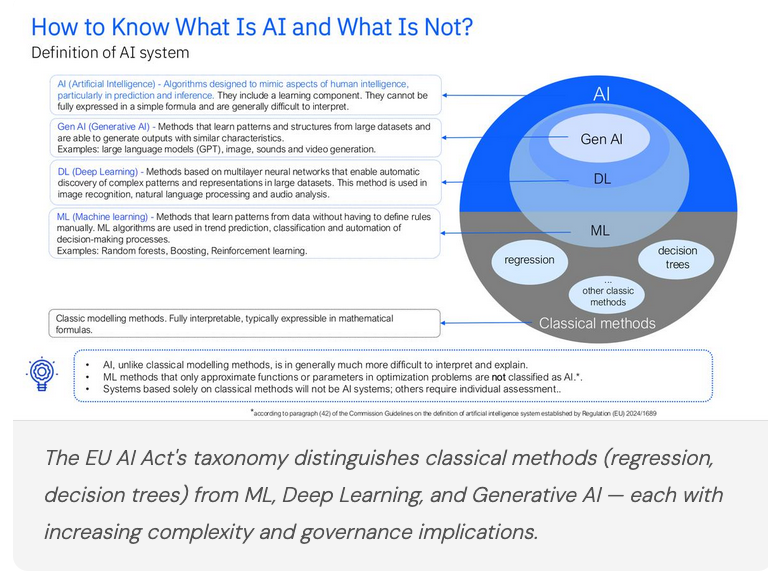

What Counts as AI? The EU AI Act’s Taxonomy

Before you can govern AI, you need to know what it is, and the EU AI Act has introduced one of the most comprehensive legal definitions to date. Not every algorithm is an AI system under the Act, and the distinction matters enormously for compliance obligations.

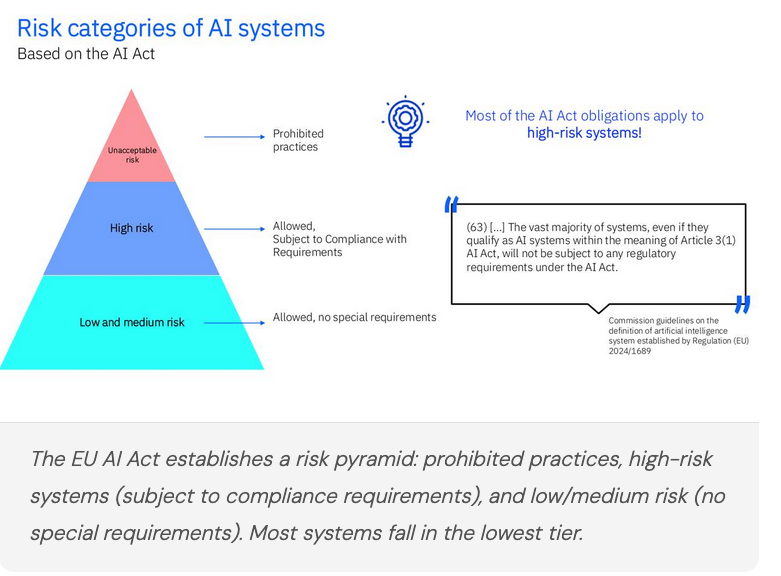

Classical statistical methods such as regression and decision trees sit outside the AI definition when they merely approximate functions or parameters in optimization problems. Machine learning, deep learning, and generative AI fall inside it. As a working heuristic, the key criterion is whether the system includes a learning component and is generally difficult to interpret; the Act’s formal definition (Article 3(1)) turns on whether a system operates with some degree of autonomy and infers from its inputs how to generate outputs. This matters because the vast majority of AI Act obligations apply specifically to high-risk AI systems, and most deployed systems won’t meet that threshold.

One Size Does Not Fit All: ML vs. GenAI Governance

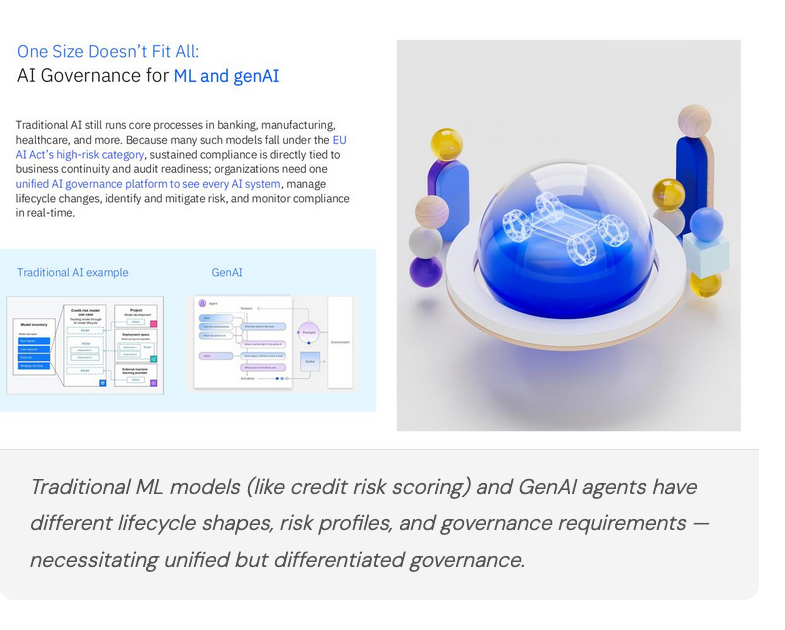

A recurring theme in mature AI governance programs is that traditional ML models and generative AI models require fundamentally different governance approaches — even as they sit within the same organizational inventory.

Traditional ML — credit risk scoring, loan approval, mortgage servicing — often runs core business processes in banking and healthcare. Many of these models fall squarely in the EU AI Act’s high-risk category, meaning sustained compliance is tied directly to business continuity. Organizations need lifecycle traceability, bias monitoring, and audit-ready documentation baked into operations.

GenAI governance introduces a different set of challenges: foundation model selection criteria, prompt lifecycle management, output quality metrics that don’t exist for classical models, and a much fuzzier boundary between “the system” and “the user.” Treating both with the same governance model leads to either over-burdening GenAI projects or under-governing high-stakes ML deployments.

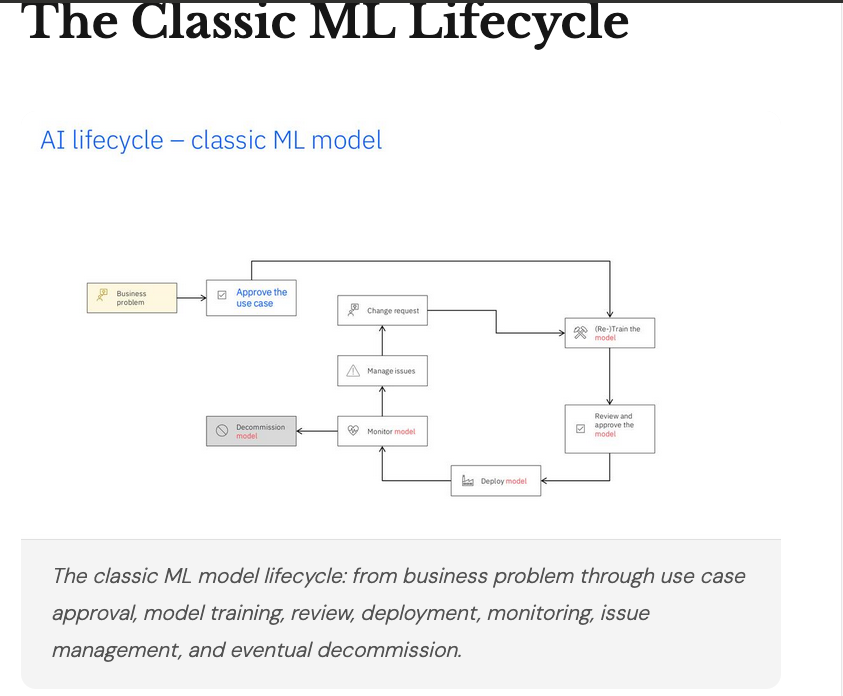

The Classic ML Lifecycle

For traditional ML, the lifecycle is well understood: a business problem drives use case approval, model training, review, deployment, continuous monitoring, and eventual decommission, with change request loops connecting these phases. Each node in this lifecycle is a governance checkpoint: documented decisions, risk assessments, bias evaluations, and explainability requirements. The key discipline is making sure none of these checkpoints is skipped under delivery pressure.
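The idea that no checkpoint may be skipped can be expressed as a small state machine that refuses to advance without a sign-off. This is a sketch only: the phase names come from the paragraph above, but the transition table, the `ModelRecord` class, and its method names are assumptions made for illustration.

```python
from enum import Enum


class Phase(Enum):
    USE_CASE_APPROVAL = "use case approval"
    TRAINING = "model training"
    REVIEW = "review"
    DEPLOYMENT = "deployment"
    MONITORING = "continuous monitoring"
    DECOMMISSION = "decommission"


# Assumed transition table. Backward edges model change-request loops.
ALLOWED = {
    Phase.USE_CASE_APPROVAL: {Phase.TRAINING},
    Phase.TRAINING: {Phase.REVIEW},
    Phase.REVIEW: {Phase.DEPLOYMENT, Phase.TRAINING},
    Phase.DEPLOYMENT: {Phase.MONITORING},
    Phase.MONITORING: {Phase.TRAINING, Phase.DECOMMISSION},
    Phase.DECOMMISSION: set(),
}


class ModelRecord:
    """Hypothetical inventory entry that enforces checkpoint sign-offs."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.phase = Phase.USE_CASE_APPROVAL
        self.pending_evidence = None  # sign-off for the current phase, if any
        self.history = []             # (phase, evidence) pairs kept for audit

    def sign_off(self, evidence: str) -> None:
        """Attach checkpoint evidence (e.g. a risk assessment reference)."""
        self.pending_evidence = evidence

    def advance(self, target: Phase) -> None:
        if target not in ALLOWED[self.phase]:
            raise ValueError(f"{self.phase.value} -> {target.value} is not allowed")
        if self.pending_evidence is None:
            raise PermissionError(f"checkpoint for {self.phase.value} has no sign-off")
        self.history.append((self.phase, self.pending_evidence))
        self.pending_evidence = None  # a re-entered phase needs a fresh sign-off
        self.phase = target


record = ModelRecord("credit-risk-scorer")
record.sign_off("use case approved by governance board")
record.advance(Phase.TRAINING)

try:
    record.advance(Phase.REVIEW)  # training checkpoint not signed off yet
except PermissionError as err:
    print(err)  # checkpoint for model training has no sign-off
```

Clearing the sign-off on every transition means that a model sent back to training through a change request cannot reuse the evidence from its first pass.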

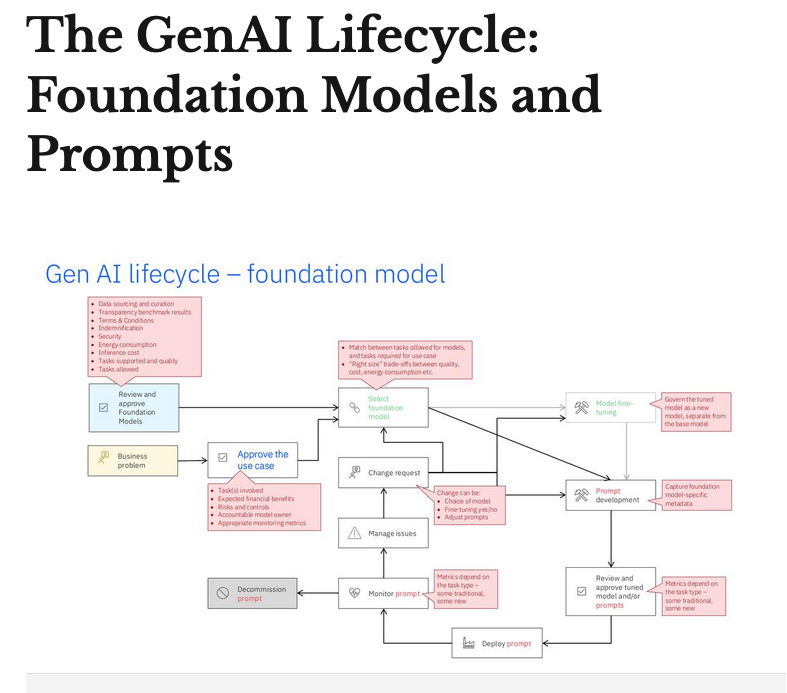

The GenAI Lifecycle

For generative AI, the lifecycle looks different. It begins with foundation model review — evaluating candidates against security, performance, cost, and alignment criteria — before use case selection. Prompt engineering becomes a governed activity, with version-controlled prompts and systematic evaluation. Retrieval-augmented generation (RAG) introduces a retrieval layer that must be evaluated independently. And the evaluation criteria themselves — faithfulness, groundedness, context relevance, answer relevance, retrieval precision — are entirely new. Every change — model swap, fine-tuning decision, prompt adjustment — triggers its own review cycle.
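A simple way to make "every change triggers its own review cycle" enforceable is to fingerprint the full configuration (model, prompt template, parameters) and compare it against the set of approved fingerprints. The sketch below assumes a plain dictionary configuration; the model name, prompt text, and function names are hypothetical.

```python
import hashlib
import json


def config_fingerprint(config: dict) -> str:
    """Stable SHA-256 hash of a GenAI configuration (model, prompt, parameters)."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_review(config: dict, approved_fingerprints: set[str]) -> bool:
    """True when this exact configuration has not been approved before."""
    return config_fingerprint(config) not in approved_fingerprints


approved_config = {
    "model": "example-foundation-model-v1",  # hypothetical model name
    "prompt_template": "Answer using only the context.\nContext: {context}\nQuestion: {question}",
    "temperature": 0.2,
}
approved = {config_fingerprint(approved_config)}

# A prompt adjustment or parameter tweak yields a new fingerprint.
candidate = dict(approved_config, temperature=0.7)

print(needs_review(approved_config, approved))  # False
print(needs_review(candidate, approved))        # True
```

Serializing with sorted keys keeps the hash independent of dictionary ordering, so only genuine changes to the model, prompt, or parameters trigger a review.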

RAG Governance in Practice: A Real-World Example

A concrete example makes the governance challenge tangible. Consider a RAG recruitment assistant: a candidate submits a question; the system retrieves relevant document chunks from a knowledge base; the combined question and context are sent to a foundation model; and the full transaction (question, retrieved context, and answer) is logged to a governance platform for audit and evaluation.
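The logged transaction can be pictured as one structured record per inference. The following sketch assumes a JSON-lines style log; the `RagTransaction` fields, the `log_transaction` helper, and the in-memory `audit_log` standing in for the governance platform are all illustrative assumptions.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class RagTransaction:
    """One RAG inference, captured in full for later audit (hypothetical schema)."""
    question: str
    retrieved_chunks: list[str]
    answer: str
    model_id: str
    transaction_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_transaction(tx: RagTransaction, sink: list[str]) -> None:
    """Append one JSON line per inference; `sink` stands in for the platform."""
    sink.append(json.dumps(asdict(tx)))


audit_log: list[str] = []
log_transaction(
    RagTransaction(
        question="How many interview rounds are there?",
        retrieved_chunks=["The hiring process has three interview rounds."],
        answer="There are three interview rounds.",
        model_id="example-foundation-model-v1",  # hypothetical model name
    ),
    audit_log,
)

print(json.loads(audit_log[0])["question"])
```

Storing the retrieved chunks alongside the answer is what allows faithfulness and retrieval quality to be evaluated after the fact, without re-running the query.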

What makes this architecture governance-ready is the logging layer. Every inference is captured, enabling retrieval quality and faithfulness evaluation at any point. A governance dashboard can surface retrieval precision, context relevance, and average precision metrics — along with threshold violation distributions across transactions — giving compliance and operations teams the visibility they need.
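To make the dashboard metrics concrete, here is a minimal sketch of retrieval precision, average precision, and a threshold violation count computed from logged relevance judgments. It assumes each transaction already carries a ranked list of relevant/not-relevant labels for its retrieved chunks; the sample data and the 0.5 threshold are invented for illustration. Average precision here is normalized by the number of relevant chunks retrieved, which is one common convention among several.

```python
def precision_at_k(relevance: list[bool], k: int) -> float:
    """Fraction of the top-k retrieved chunks judged relevant."""
    top = relevance[:k]
    return sum(top) / len(top) if top else 0.0


def average_precision(relevance: list[bool]) -> float:
    """Mean of precision@i over the ranks i where a relevant chunk appears."""
    hits = 0
    total = 0.0
    for rank, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


# Ranked relevance labels per logged transaction (illustrative data).
transactions = {
    "tx-1": [True, False, True, False],
    "tx-2": [False, False, True],
    "tx-3": [True, True],
}
AP_THRESHOLD = 0.5  # assumed threshold, set by the governance team

violations = [
    tx_id
    for tx_id, relevance in transactions.items()
    if average_precision(relevance) < AP_THRESHOLD
]

print(precision_at_k(transactions["tx-1"], 4))  # 0.5
print(violations)                               # ['tx-2']
```

Average precision rewards placing relevant chunks early in the ranking, which is why `tx-2` violates the threshold even though it did retrieve a relevant chunk.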

Drilling into individual transactions, governance operators can see faithfulness and answer relevance scores alongside source highlights — color-coded green for faithful passages, orange for somewhat faithful, red for unfaithful. This is the kind of per-inference traceability that regulators and audit committees are beginning to demand.

Agentic AI Governance: Three Capabilities Every Platform Needs

For agentic AI specifically, a governance platform needs to go well beyond model-level monitoring. Three distinct capabilities are required.

1. Agent Onboarding and Lifecycle Governance

Before an agent is deployed, it must be onboarded through a structured process: documenting the business goal, completing a risk questionnaire that identifies regulatory compliance needs, assigning a business owner, and obtaining cross-functional stakeholder approval. The output should be a documented risk assessment — covering risks like hallucination, toxic output, and prompt injection — with inherent and residual risk ratings for each dimension.
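The onboarding output described above can be sketched as a record that lists inherent and residual ratings per risk and reports what still blocks approval. Everything here is an assumption for illustration: the three-level scale, the class and field names, the approver roles, and the rule that a high residual rating blocks onboarding.

```python
from dataclasses import dataclass, field

LEVELS = {"low": 1, "medium": 2, "high": 3}  # assumed rating scale


@dataclass
class RiskRating:
    risk: str
    inherent: str  # rating before controls are applied
    residual: str  # rating after controls are applied

    def __post_init__(self) -> None:
        if LEVELS[self.residual] > LEVELS[self.inherent]:
            raise ValueError(f"{self.risk}: residual rating exceeds inherent rating")


@dataclass
class OnboardingRecord:
    agent_name: str
    business_goal: str
    business_owner: str
    ratings: list[RiskRating]
    approvals: set[str] = field(default_factory=set)

    def blockers(self, required_approvers: set[str]) -> list[str]:
        """List everything that still prevents the agent from being approved."""
        issues = []
        if not self.business_owner:
            issues.append("no business owner assigned")
        missing = sorted(required_approvers - self.approvals)
        if missing:
            issues.append("missing approvals: " + ", ".join(missing))
        for rating in self.ratings:
            if rating.residual == "high":
                issues.append(f"residual risk still high: {rating.risk}")
        return issues


record = OnboardingRecord(
    agent_name="recruitment-assistant",
    business_goal="answer candidate questions about the hiring process",
    business_owner="talent-acquisition lead",
    ratings=[
        RiskRating("hallucination", inherent="high", residual="medium"),
        RiskRating("toxic output", inherent="medium", residual="low"),
        RiskRating("prompt injection", inherent="high", residual="high"),
    ],
    approvals={"legal", "security"},
)

for issue in record.blockers({"legal", "security", "compliance"}):
    print(issue)
```

With the sample data, the record reports a missing compliance approval and an unmitigated prompt injection risk, which is the kind of explicit, reviewable output an onboarding gate needs.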

2. Agent Evaluation

Evaluating agents is fundamentally harder than evaluating static models. Agents execute multi-step workflows — evaluation must capture performance across the entire chain, not just the final output. Robust evaluation frameworks integrate with agent orchestration layers, measuring metrics like task completion rate, tool call accuracy, and contextual faithfulness across workflow configurations. Root cause analysis on underperforming runs is essential for iterative improvement.
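As a rough illustration of chain-level evaluation, the sketch below computes task completion rate and tool call accuracy over a set of runs and locates the first wrong tool call as a starting point for root cause analysis. The `AgentRun` and `ToolCall` structures, the expected-tool labels, and the sample runs are assumptions; real orchestration layers expose traces in their own formats.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolCall:
    expected_tool: str  # tool a reference trajectory says should be called
    actual_tool: str    # tool the agent actually called


@dataclass
class AgentRun:
    run_id: str
    task_completed: bool
    tool_calls: list[ToolCall]


def task_completion_rate(runs: list[AgentRun]) -> float:
    """Share of runs in which the agent completed its task."""
    return sum(r.task_completed for r in runs) / len(runs) if runs else 0.0


def tool_call_accuracy(runs: list[AgentRun]) -> float:
    """Share of all tool calls, across all runs, that matched the expected tool."""
    calls = [c for r in runs for c in r.tool_calls]
    if not calls:
        return 0.0
    return sum(c.expected_tool == c.actual_tool for c in calls) / len(calls)


def first_wrong_call(run: AgentRun) -> Optional[int]:
    """Index of the first mismatched tool call, or None if every call matched."""
    for index, call in enumerate(run.tool_calls):
        if call.expected_tool != call.actual_tool:
            return index
    return None


runs = [
    AgentRun("r1", True, [ToolCall("search", "search"), ToolCall("summarize", "summarize")]),
    AgentRun("r2", False, [ToolCall("search", "search"), ToolCall("lookup_order", "search")]),
]

print(task_completion_rate(runs))  # 0.5
print(tool_call_accuracy(runs))    # 0.75
print(first_wrong_call(runs[1]))   # 1
```

Scoring each step rather than only the final answer is what makes the failed run diagnosable: `r2` went wrong at its second tool call, not at the output stage.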

3. A Managed Tool Catalog

One of the most underappreciated governance challenges in multi-agent systems is tool sprawl — organizations accumulating dozens of overlapping, inconsistently documented tool implementations across teams. A managed tool catalog addresses this with a consolidated, searchable inventory of approved tools: lineage tracking, quality metrics, usage statistics, and side-by-side comparison capabilities. Promoting approved tools reduces redundant security reviews and enforces consistent quality standards across every agent project.
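A managed catalog can start as something quite small: a registry that rejects duplicate names, searches approved tools by default, and counts usage. The sketch below is illustrative only; `ToolEntry`, `ToolCatalog`, and the sample tools are hypothetical, and a production catalog would add lineage and quality metrics on top.

```python
from dataclasses import dataclass


@dataclass
class ToolEntry:
    name: str
    version: str
    owner_team: str
    description: str
    approved: bool = False
    usage_count: int = 0


class ToolCatalog:
    """Hypothetical in-memory inventory of agent tools."""

    def __init__(self) -> None:
        self._tools: dict[str, ToolEntry] = {}

    def register(self, entry: ToolEntry) -> None:
        # Rejecting duplicate names nudges teams to reuse existing tools.
        if entry.name in self._tools:
            raise ValueError(f"tool '{entry.name}' is already registered")
        self._tools[entry.name] = entry

    def search(self, keyword: str, approved_only: bool = True) -> list[ToolEntry]:
        """Case-insensitive keyword match on tool name and description."""
        kw = keyword.lower()
        return [
            tool
            for tool in self._tools.values()
            if (tool.approved or not approved_only)
            and (kw in tool.name.lower() or kw in tool.description.lower())
        ]

    def record_use(self, name: str) -> None:
        self._tools[name].usage_count += 1


catalog = ToolCatalog()
catalog.register(ToolEntry("web_search", "1.2.0", "platform", "Search the public web", approved=True))
catalog.register(ToolEntry("web_search_beta", "0.1.0", "growth", "Experimental web search"))
catalog.record_use("web_search")

print([t.name for t in catalog.search("search")])                       # ['web_search']
print([t.name for t in catalog.search("search", approved_only=False)])  # ['web_search', 'web_search_beta']
```

Defaulting searches to approved tools is the mechanism that promotes reviewed implementations: an agent builder has to opt in explicitly to see anything that has not passed review.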

Security: Tackling Shadow AI

Governance without security is incomplete. One of the highest-risk categories in enterprise AI today is Shadow AI — models, applications, and agents running in the organization’s cloud or network entirely outside formal governance processes.

An AI security layer integrated into the governance platform should automatically discover shadow AI deployments and surface them for formal onboarding. Beyond discovery, it needs to provide security compliance validation, vulnerability detection, and real-time guardrails at inference time.

Specifically, a mature AI security capability should validate compliance against major regulatory and standards libraries (EU AI Act, ISO 42001, NIST AI RMF, OWASP), detect and help remediate high-risk vulnerabilities through automated penetration testing, and provide a real-time guardrails library for continuous runtime monitoring of AI security risks.
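At its simplest, the discovery step described above is a comparison between what is actually running and what the governance inventory knows about. The sketch below assumes a scan has already produced a dictionary of deployments keyed by identifier; the identifiers, metadata fields, and the `find_shadow_ai` helper are hypothetical.

```python
def find_shadow_ai(discovered: dict[str, dict], inventory: set[str]) -> list[dict]:
    """Return discovered deployments that are missing from the governance inventory."""
    return [
        {"deployment_id": deployment_id, **metadata}
        for deployment_id, metadata in sorted(discovered.items())
        if deployment_id not in inventory
    ]


# Deployments already onboarded through the formal governance process.
inventory = {"churn-model-prod", "support-agent-prod"}

# Result of a (hypothetical) scan of cloud accounts and network endpoints.
discovered = {
    "churn-model-prod": {"kind": "model", "location": "cloud-account-a"},
    "hr-chatbot-test": {"kind": "agent", "location": "cloud-account-b"},
    "support-agent-prod": {"kind": "agent", "location": "cloud-account-a"},
}

for finding in find_shadow_ai(discovered, inventory):
    print(finding)
```

Each finding carries enough metadata to open an onboarding request, which turns discovery into the first step of formal governance instead of a one-off report.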

Compliance Acceleration

The compliance function in AI governance is often the most time-consuming element — and the one most at risk of becoming a bottleneck as AI deployments scale. The answer is automation: mapping AI use cases to applicable regulations automatically, identifying compliance gaps proactively based on use case characteristics and deployment context, and providing configurable assessment workflows that serve both AI owners and compliance professionals efficiently. The target regulatory landscape includes the EU AI Act, ISO 42001, NIST AI RMF, and a growing body of regional and sector-specific rules.
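The mapping and gap-identification steps can be sketched as a rule table evaluated against use case attributes. The rules below are placeholders that an organization would define with its own legal and compliance teams; they are not statements about when any framework actually applies, and the `UseCase` fields and function names are assumptions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class UseCase:
    name: str
    deployment_regions: frozenset
    certification_in_scope: bool


# Placeholder policy rules (assumed, organization-defined, not legal guidance).
RULES: dict[str, Callable[[UseCase], bool]] = {
    "EU AI Act": lambda uc: "EU" in uc.deployment_regions,
    "NIST AI RMF": lambda uc: "US" in uc.deployment_regions,
    "ISO 42001": lambda uc: uc.certification_in_scope,
}


def applicable_frameworks(use_case: UseCase) -> list[str]:
    """Frameworks whose rule matches the use case, in alphabetical order."""
    return sorted(name for name, applies in RULES.items() if applies(use_case))


def compliance_gaps(use_case: UseCase, completed: set[str]) -> list[str]:
    """Applicable frameworks that have no completed assessment yet."""
    return [f for f in applicable_frameworks(use_case) if f not in completed]


use_case = UseCase(
    name="loan approval scoring",
    deployment_regions=frozenset({"EU"}),
    certification_in_scope=True,
)

print(applicable_frameworks(use_case))                        # ['EU AI Act', 'ISO 42001']
print(compliance_gaps(use_case, completed={"ISO 42001"}))     # ['EU AI Act']
```

Keeping the rules in a single table means a regulatory change is handled by editing one entry, after which every registered use case can be re-evaluated automatically.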

The General-Purpose AI Code of Practice

At the industry level, the EU’s General-Purpose AI (GPAI) Code of Practice represents a significant step toward voluntary harmonization before mandatory enforcement kicks in. The code covers three chapters: Transparency, Copyright, and Safety and Security. Major AI providers — including Amazon, Anthropic, Google, Microsoft, Mistral AI, OpenAI, ServiceNow, and many others — have signed on, demonstrating that the industry recognizes the value of proactive governance commitments rather than waiting for enforcement pressure.

Key Conclusions

Experience across enterprise AI deployments — at scale, across regulated industries — consistently surfaces the same hard-won lessons:

- The algorithm alone doesn’t define the system. Context, deployment purpose, and real-world impact determine governance obligations more than the underlying method.

- Interdisciplinary teams are mandatory. Legal, technology, business, compliance, and security must collaborate — governance siloed in any single function will fail.

- Business value must be defined first. A prerequisite for operating any AI system responsibly is articulating what it’s supposed to accomplish and how success is measured.

- Data is the foundation. Quality, availability, and security of training and inference data underpin every other governance capability.

- New risks are hard to predict. Continuous monitoring isn’t optional — the pace of change in AI capabilities means yesterday’s risk model is already incomplete.

- Risk challenges are shared across industries. Poor data quality, integration failures, insufficient model performance, business resistance, and over-reliance on external model providers are universal problems requiring collective attention.

“The goal isn’t to slow AI down. It’s to build the trust infrastructure that lets organizations scale AI with confidence.”

The age of agents is here. Governing it well is the defining enterprise AI challenge of the next decade — and the organizations that invest in governance architecture now will be the ones who can move fastest and farthest, with the confidence that comes from knowing their systems are transparent, traceable, and trustworthy.



Ship AI is a video podcast covering the trends, tools, and strategies driving enterprise AI. New episodes every two weeks.